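This post explains how to create a new Visual Studio project and add Caffe to it as a library. Once this is done, you can use models trained with Caffe, together with Caffe's classification code, in your own application. The example below uses a trained facial expression recognition model and Caffe's classification functions in a new project. Detailed steps follow.

Create a new Visual Studio project and add the Caffe library.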

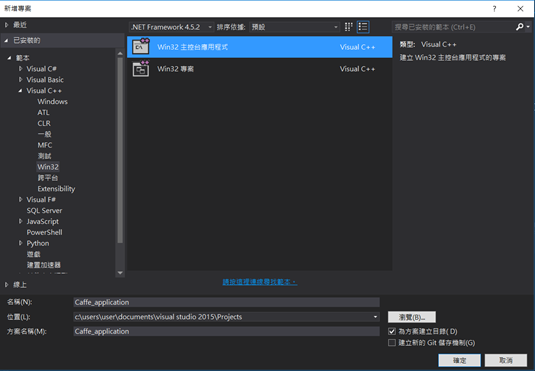

(Visual Studio 2015 is used as the example here.)

Select New Project -> Visual C++ -> Win32 Console Application, then click OK.

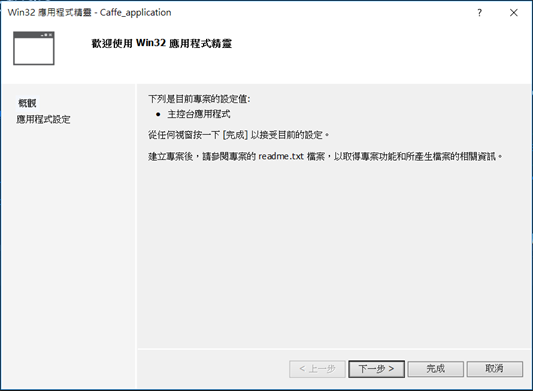

Click Next.

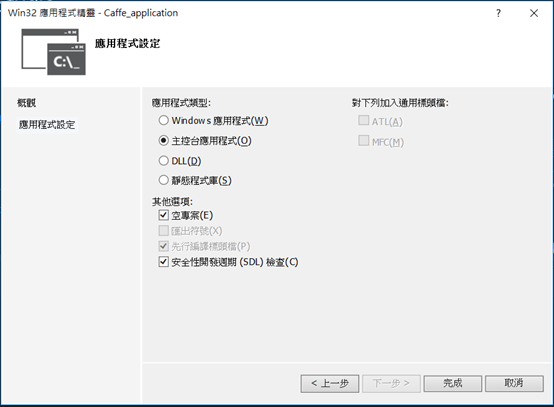

As shown below, select Empty project and click Finish.

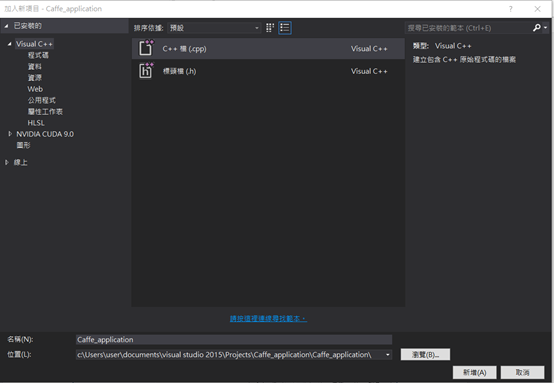

Once the project has been created, right-click the project -> Add -> New Item to open the dialog shown below, and add a .cpp file.

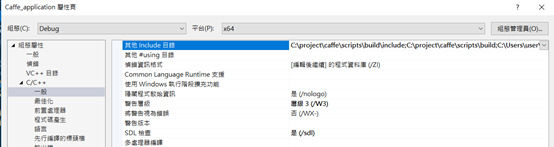

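To add the Caffe library to the new project, right-click the project -> Properties.

(Make sure Configuration is set to Debug and Platform is set to x64, at the top of the screenshot below.)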

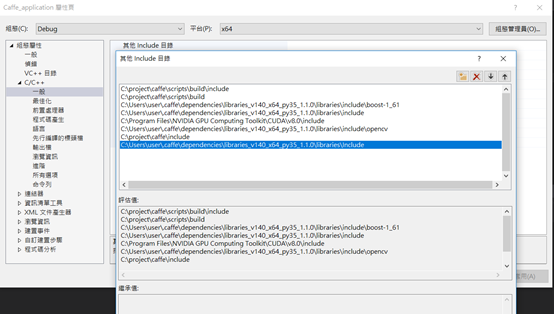

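Go to C/C++ -> General -> Additional Include Directories.

Add the following include directories, as shown in the screenshot below.

(Adjust them to where Caffe, CUDA, and libraries_v140_x64_py35_1.1.0 are installed on your machine.)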

C:\project\caffe\scripts\build\include

C:\project\caffe\scripts\build

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\include\boost-1_61

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\include

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v8.0\include

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\include\opencv

C:\project\caffe\include

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\Include

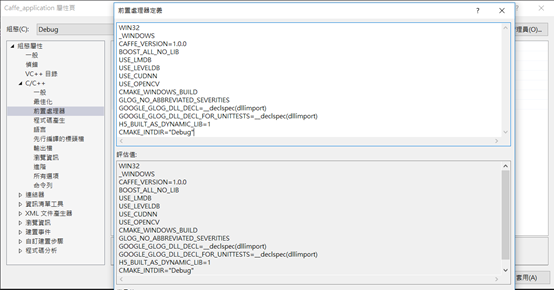

Select Preprocessor and add the following to Preprocessor Definitions.

WIN32

_WINDOWS

CAFFE_VERSION=1.0.0

BOOST_ALL_NO_LIB

USE_LMDB

USE_LEVELDB

USE_CUDNN

USE_OPENCV

CMAKE_WINDOWS_BUILD

GLOG_NO_ABBREVIATED_SEVERITIES

GOOGLE_GLOG_DLL_DECL=__declspec(dllimport)

GOOGLE_GLOG_DLL_DECL_FOR_UNITTESTS=__declspec(dllimport)

H5_BUILT_AS_DYNAMIC_LIB=1

CMAKE_INTDIR="Debug"

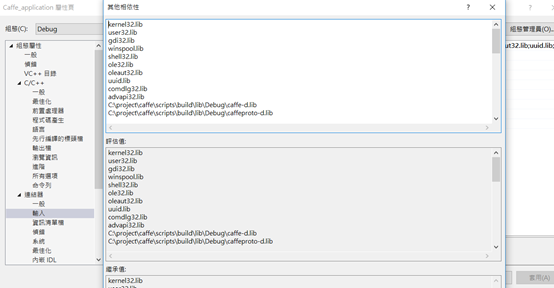

Select Linker -> Input and add the following to Additional Dependencies (change the path prefixes to match your own installation).

kernel32.lib

user32.lib

gdi32.lib

winspool.lib

shell32.lib

ole32.lib

oleaut32.lib

uuid.lib

comdlg32.lib

advapi32.lib

C:\project\caffe\scripts\build\lib\Debug\caffe-d.lib

C:\project\caffe\scripts\build\lib\Debug\caffeproto-d.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\boost_system-vc140-mt-gd-1_61.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\boost_thread-vc140-mt-gd-1_61.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\boost_filesystem-vc140-mt-gd-1_61.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\boost_chrono-vc140-mt-gd-1_61.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\boost_date_time-vc140-mt-gd-1_61.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\boost_atomic-vc140-mt-gd-1_61.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\glogd.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\gflagsd.lib

shlwapi.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\libprotobufd.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\caffehdf5_hl_D.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\caffehdf5_D.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\caffezlibd.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\lmdbd.lib

ntdll.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\leveldbd.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\snappy_staticd.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\caffezlibd.lib

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v8.0\lib\x64\cudart.lib

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v8.0\lib\x64\curand.lib

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v8.0\lib\x64\cublas.lib

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v8.0\lib\x64\cublas_device.lib

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v8.0\lib\x64\cudnn.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\x64\vc14\lib\opencv_highgui310d.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\x64\vc14\lib\opencv_videoio310d.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\x64\vc14\lib\opencv_imgcodecs310d.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\x64\vc14\lib\opencv_imgproc310d.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\x64\vc14\lib\opencv_core310d.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\libopenblas.dll.a

C:\Users\user\Anaconda3\libs\python35.lib

C:\Users\user\.caffe\dependencies\libraries_v140_x64_py35_1.1.0\libraries\lib\boost_python-vc140-mt-gd-1_61.lib

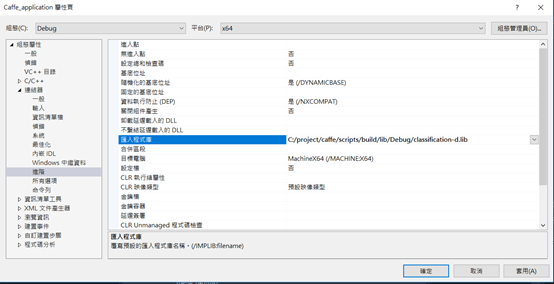

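Select Linker -> Advanced -> Import Library and enter the following.

(Adjust the path to match your installation. Note that this field sets the linker's /IMPLIB option, which names the import library the linker generates. It does not add a library to link against, so if your project actually needs symbols from classification-d.lib, list it under Linker -> Input instead.)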

C:/project/caffe/scripts/build/lib/Debug/classification-d.lib

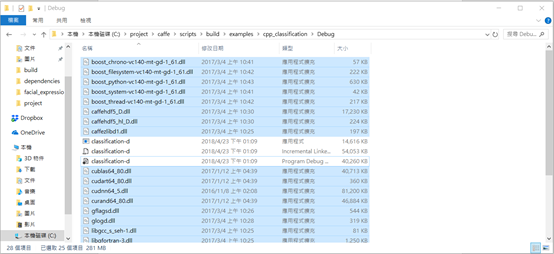

Copy the DLL files from Caffe's cpp_classification output folder (adjust the path to your own installation).

C:\project\caffe\scripts\build\examples\cpp_classification\Debug

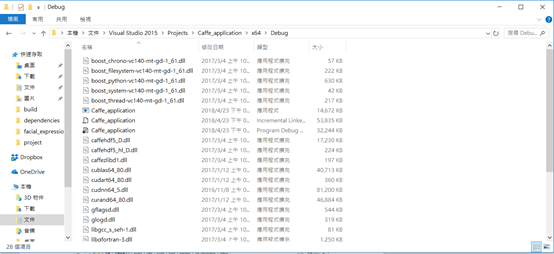

Paste them into the new project's x64/Debug folder.

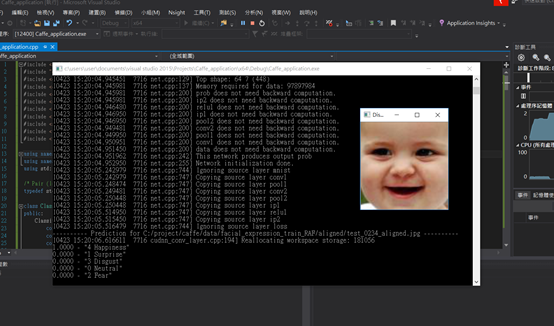

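Finally, copy the code from classification.cpp in the Caffe project into the new project's .cpp file. When run, it prints the top predictions for the test image and opens a window showing that image, which confirms that the Caffe library has been successfully included in the new project.

(Make sure Debug and x64 are selected in the toolbar.)

classification.cpp

(Change the file paths in main() to your own.)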

#include <caffe/caffe.hpp>

#ifdef USE_OPENCV

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/imgproc/imgproc.hpp>

#endif // USE_OPENCV

#include <algorithm>

#include <fstream>

#include <iomanip>

#include <iostream>

#include <memory>

#include <string>

#include <utility>

#include <vector>

#ifdef USE_OPENCV

using namespace caffe; // NOLINT(build/namespaces)

using std::string;

/* Pair (label, confidence) representing a prediction. */

typedef std::pair<string, float> Prediction;

class Classifier {

public:

Classifier(const string& model_file,

const string& trained_file,

const string& mean_file,

const string& label_file);

std::vector<Prediction> Classify(const cv::Mat& img, int N = 5);

private:

void SetMean(const string& mean_file);

std::vector<float> Predict(const cv::Mat& img);

void WrapInputLayer(std::vector<cv::Mat>* input_channels);

void Preprocess(const cv::Mat& img,

std::vector<cv::Mat>* input_channels);

private:

shared_ptr<Net<float> > net_;

cv::Size input_geometry_;

int num_channels_;

cv::Mat mean_;

std::vector<string> labels_;

};

Classifier::Classifier(const string& model_file,

const string& trained_file,

const string& mean_file,

const string& label_file) {

#ifdef CPU_ONLY

Caffe::set_mode(Caffe::CPU);

#else

Caffe::set_mode(Caffe::GPU);

#endif

/* Load the network. */

net_.reset(new Net<float>(model_file, TEST));

net_->CopyTrainedLayersFrom(trained_file);

CHECK_EQ(net_->num_inputs(), 1) << "Network should have exactly one input.";

CHECK_EQ(net_->num_outputs(), 1) << "Network should have exactly one output.";

Blob<float>* input_layer = net_->input_blobs()[0];

num_channels_ = input_layer->channels();

CHECK(num_channels_ == 3 || num_channels_ == 1)

<< "Input layer should have 1 or 3 channels.";

input_geometry_ = cv::Size(input_layer->width(), input_layer->height());

/* Load the binaryproto mean file. */

SetMean(mean_file);

/* Load labels. */

std::ifstream labels(label_file.c_str());

CHECK(labels) << "Unable to open labels file " << label_file;

string line;

while (std::getline(labels, line))

labels_.push_back(string(line));

Blob<float>* output_layer = net_->output_blobs()[0];

CHECK_EQ(labels_.size(), output_layer->channels())

<< "Number of labels is different from the output layer dimension.";

}

static bool PairCompare(const std::pair<float, int>& lhs,

const std::pair<float, int>& rhs) {

return lhs.first > rhs.first;

}

/* Return the indices of the top N values of vector v. */

static std::vector<int> Argmax(const std::vector<float>& v, int N) {

std::vector<std::pair<float, int> > pairs;

for (size_t i = 0; i < v.size(); ++i)

pairs.push_back(std::make_pair(v[i], static_cast<int>(i)));

std::partial_sort(pairs.begin(), pairs.begin() + N, pairs.end(), PairCompare);

std::vector<int> result;

for (int i = 0; i < N; ++i)

result.push_back(pairs[i].second);

return result;

}

/* Return the top N predictions. */

std::vector<Prediction> Classifier::Classify(const cv::Mat& img, int N) {

std::vector<float> output = Predict(img);

N = std::min<int>(labels_.size(), N);

std::vector<int> maxN = Argmax(output, N);

std::vector<Prediction> predictions;

for (int i = 0; i < N; ++i) {

int idx = maxN[i];

predictions.push_back(std::make_pair(labels_[idx], output[idx]));

}

return predictions;

}

/* Load the mean file in binaryproto format. */

void Classifier::SetMean(const string& mean_file) {

BlobProto blob_proto;

ReadProtoFromBinaryFileOrDie(mean_file.c_str(), &blob_proto);

/* Convert from BlobProto to Blob<float> */

Blob<float> mean_blob;

mean_blob.FromProto(blob_proto);

CHECK_EQ(mean_blob.channels(), num_channels_)

<< "Number of channels of mean file doesn't match input layer.";

/* The format of the mean file is planar 32-bit float BGR or grayscale. */

std::vector<cv::Mat> channels;

float* data = mean_blob.mutable_cpu_data();

for (int i = 0; i < num_channels_; ++i) {

/* Extract an individual channel. */

cv::Mat channel(mean_blob.height(), mean_blob.width(), CV_32FC1, data);

channels.push_back(channel);

data += mean_blob.height() * mean_blob.width();

}

/* Merge the separate channels into a single image. */

cv::Mat mean;

cv::merge(channels, mean);

/* Compute the global mean pixel value and create a mean image

* filled with this value. */

cv::Scalar channel_mean = cv::mean(mean);

mean_ = cv::Mat(input_geometry_, mean.type(), channel_mean);

}

std::vector<float> Classifier::Predict(const cv::Mat& img) {

Blob<float>* input_layer = net_->input_blobs()[0];

input_layer->Reshape(1, num_channels_,

input_geometry_.height, input_geometry_.width);

/* Forward dimension change to all layers. */

net_->Reshape();

std::vector<cv::Mat> input_channels;

WrapInputLayer(&input_channels);

Preprocess(img, &input_channels);

net_->Forward();

/* Copy the output layer to a std::vector */

Blob<float>* output_layer = net_->output_blobs()[0];

const float* begin = output_layer->cpu_data();

const float* end = begin + output_layer->channels();

return std::vector<float>(begin, end);

}

/* Wrap the input layer of the network in separate cv::Mat objects

* (one per channel). This way we save one memcpy operation and we

* don't need to rely on cudaMemcpy2D. The last preprocessing

* operation will write the separate channels directly to the input

* layer. */

void Classifier::WrapInputLayer(std::vector<cv::Mat>* input_channels) {

Blob<float>* input_layer = net_->input_blobs()[0];

int width = input_layer->width();

int height = input_layer->height();

float* input_data = input_layer->mutable_cpu_data();

for (int i = 0; i < input_layer->channels(); ++i) {

cv::Mat channel(height, width, CV_32FC1, input_data);

input_channels->push_back(channel);

input_data += width * height;

}

}

void Classifier::Preprocess(const cv::Mat& img,

std::vector<cv::Mat>* input_channels) {

/* Convert the input image to the input image format of the network. */

cv::Mat sample;

if (img.channels() == 3 && num_channels_ == 1)

cv::cvtColor(img, sample, cv::COLOR_BGR2GRAY);

else if (img.channels() == 4 && num_channels_ == 1)

cv::cvtColor(img, sample, cv::COLOR_BGRA2GRAY);

else if (img.channels() == 4 && num_channels_ == 3)

cv::cvtColor(img, sample, cv::COLOR_BGRA2BGR);

else if (img.channels() == 1 && num_channels_ == 3)

cv::cvtColor(img, sample, cv::COLOR_GRAY2BGR);

else

sample = img;

cv::Mat sample_resized;

if (sample.size() != input_geometry_)

cv::resize(sample, sample_resized, input_geometry_);

else

sample_resized = sample;

cv::Mat sample_float;

if (num_channels_ == 3)

sample_resized.convertTo(sample_float, CV_32FC3);

else

sample_resized.convertTo(sample_float, CV_32FC1);

cv::Mat sample_normalized;

cv::subtract(sample_float, mean_, sample_normalized);

/* This operation will write the separate BGR planes directly to the

* input layer of the network because it is wrapped by the cv::Mat

* objects in input_channels. */

cv::split(sample_normalized, *input_channels);

CHECK(reinterpret_cast<float*>(input_channels->at(0).data)

== net_->input_blobs()[0]->cpu_data())

<< "Input channels are not wrapping the input layer of the network.";

}

int main() {

string model_file = "C:/project/caffe/data/facial_expression_train_RAF-3/lenet_deploy.prototxt";

string trained_file = "C:/project/caffe/data/facial_expression_train_RAF-3/snapshot_lenet/_iter_10000.caffemodel";

string mean_file = "C:/project/caffe/data/facial_expression_train_RAF-3/mean.binaryproto";

string label_file = "C:/project/caffe/data/facial_expression_train_RAF-3/synset_words.txt";

string file = "C:/project/caffe/data/facial_expression_train_RAF-3/aligned/test_0234_aligned.jpg";

Classifier classifier(model_file, trained_file, mean_file, label_file); //loading training model

std::cout << "---------- Prediction for "

<< file << " ----------" << std::endl;

cv::Mat img = cv::imread(file, -1);

CHECK(!img.empty()) << "Unable to decode image " << file;

std::vector<Prediction> predictions = classifier.Classify(img);

/* Print the top N predictions. */

for (size_t i = 0; i < predictions.size(); ++i) {

Prediction p = predictions[i];

std::cout << std::fixed << std::setprecision(4) << p.second << " - \""

<< p.first << "\"" << std::endl;

}

cv::imshow("Display window", img);

cv::waitKey(0);

}

#else

int main(int argc, char** argv) {

LOG(FATAL) << "This example requires OpenCV; compile with USE_OPENCV.";

}

#endif // USE_OPENCV

Feel free to leave a comment if you have any questions.
