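When several people develop on the same server, anyone who runs GPU computation with TensorFlow without the settings below will have their process claim nearly all of the GPU memory, leaving none for other users. This post records how to limit that memory usage and how to check the current usage.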

I. Limiting how much GPU memory TensorFlow uses in Python or Jupyter:

1. Use a custom fraction of the GPU memory:

    import tensorflow as tf

    cfg = tf.ConfigProto()
    cfg.gpu_options.per_process_gpu_memory_fraction = 0.5  # use 50% of the GPU memory
    session = tf.Session(config=cfg)

2. Start with a minimal amount of memory and let it grow as needed:

    import tensorflow as tf

    cfg = tf.ConfigProto()
    cfg.gpu_options.allow_growth = True  # allocate only what is needed, growing on demand
    session = tf.Session(config=cfg)

II. Selecting which GPU to use in Python:

    import os

    # Set this before TensorFlow initializes the GPU.
    os.environ["CUDA_VISIBLE_DEVICES"] = "1"  # expose only GPU number 1; the default device is 0
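`CUDA_VISIBLE_DEVICES` also accepts a comma-separated list of indices, and an empty value hides every GPU. A minimal sketch of a helper for this follows; the name `select_gpus` is hypothetical and the function only sets the environment variable, so it has to run before TensorFlow first touches the GPU.

```python
import os


def select_gpus(indices):
    """Expose only the given GPU indices to CUDA programs in this process.

    `select_gpus` is an illustrative helper, not a TensorFlow function.
    Passing an empty list hides all GPUs, so TensorFlow falls back to the CPU.
    """
    value = ",".join(str(int(i)) for i in indices)
    os.environ["CUDA_VISIBLE_DEVICES"] = value
    return value


select_gpus([1])  # same effect as os.environ["CUDA_VISIBLE_DEVICES"] = "1"
```

Note that the visible devices are renumbered from 0 inside the process, so after `select_gpus([1])` TensorFlow sees the chosen card as its GPU 0.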

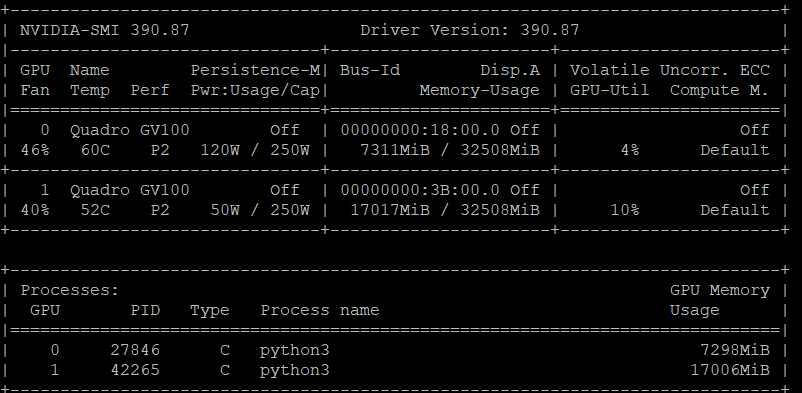

III. Checking GPU memory and utilization:

nvidia-smi

Running this command prints output like the screenshot, including the device names, memory usage, and GPU compute utilization.
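The same information can be read from a script by using the query mode of `nvidia-smi`. The sketch below assumes `nvidia-smi` is on the `PATH`; the helper names (`parse_gpu_csv`, `query_gpus`) and dictionary keys are made up for illustration, and GPU names containing commas are not handled.

```python
import subprocess

QUERY_FIELDS = "index,name,memory.used,memory.total,utilization.gpu"


def _to_int(field):
    # Some fields can come back as "[N/A]" on certain cards; map those to None.
    field = field.strip()
    return int(field) if field.isdigit() else None


def parse_gpu_csv(text):
    """Parse `nvidia-smi --format=csv,noheader,nounits` output into dicts."""
    rows = []
    for line in text.strip().splitlines():
        index, name, used, total, util = [p.strip() for p in line.split(",")]
        rows.append({
            "index": int(index),
            "name": name,
            "memory_used_mib": _to_int(used),
            "memory_total_mib": _to_int(total),
            "utilization_pct": _to_int(util),
        })
    return rows


def query_gpus():
    """Run nvidia-smi once and return one dict per GPU."""
    out = subprocess.check_output(
        ["nvidia-smi",
         "--query-gpu=" + QUERY_FIELDS,
         "--format=csv,noheader,nounits"],
        universal_newlines=True,
    )
    return parse_gpu_csv(out)
```

Calling `query_gpus()` on the server returns a list that can be filtered, for example to pick the GPU with the least memory in use before setting `CUDA_VISIBLE_DEVICES`.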

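IV. A worked example

This example uses MNIST handwritten digit recognition to test and verify the settings.

session = tf.Session(config=cfg) can be replaced with with tf.Session(config=cfg) as sess: so that the session is used directly inside the with block.

(Counting non-blank lines, note lines 4, 5, 6, 7 and 61 of the program: the GPU selection through os.environ, the cfg setup, and the with tf.Session(config=cfg) line.)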

    from __future__ import print_function
    import tensorflow as tf
    from tensorflow.examples.tutorials.mnist import input_data
    import os
    os.environ["CUDA_VISIBLE_DEVICES"] = "1"  # use GPU number 1
    cfg = tf.ConfigProto()
    cfg.gpu_options.per_process_gpu_memory_fraction = 0.1  # use 10% of the GPU memory
    # MNIST digits 0 to 9, with one-hot encoded labels
    mnist = input_data.read_data_sets('MNIST_data', one_hot=True)

    def compute_accuracy(v_xs, v_ys):
        global prediction
        y_pre = sess.run(prediction, feed_dict={xs: v_xs, keep_prob: 1})
        correct_prediction = tf.equal(tf.argmax(y_pre, 1), tf.argmax(v_ys, 1))
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
        result = sess.run(accuracy, feed_dict={xs: v_xs, ys: v_ys, keep_prob: 1})
        return result

    def weight_variable(shape):
        initial = tf.truncated_normal(shape, stddev=0.1)
        return tf.Variable(initial)

    def bias_variable(shape):
        initial = tf.constant(0.1, shape=shape)
        return tf.Variable(initial)

    def conv2d(x, W):
        # stride [1, x_movement, y_movement, 1]
        # Must have strides[0] = strides[3] = 1
        return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

    def max_pool_2x2(x):
        # stride [1, x_movement, y_movement, 1]
        return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

    # define placeholder for inputs to network
    xs = tf.placeholder(tf.float32, [None, 784])/255.  # 28x28
    ys = tf.placeholder(tf.float32, [None, 10])
    keep_prob = tf.placeholder(tf.float32)
    x_image = tf.reshape(xs, [-1, 28, 28, 1])
    # print(x_image.shape)  # [n_samples, 28,28,1]

    ## conv1 layer ##
    W_conv1 = weight_variable([5, 5, 1, 32])  # patch 5x5, in size 1, out size 32
    b_conv1 = bias_variable([32])
    h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)  # output size 28x28x32
    h_pool1 = max_pool_2x2(h_conv1)  # output size 14x14x32

    ## conv2 layer ##
    W_conv2 = weight_variable([5, 5, 32, 64])  # patch 5x5, in size 32, out size 64
    b_conv2 = bias_variable([64])
    h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)  # output size 14x14x64
    h_pool2 = max_pool_2x2(h_conv2)  # output size 7x7x64

    ## fc1 layer ##
    W_fc1 = weight_variable([7*7*64, 1024])
    b_fc1 = bias_variable([1024])
    # [n_samples, 7, 7, 64] ->> [n_samples, 7*7*64]
    h_pool2_flat = tf.reshape(h_pool2, [-1, 7*7*64])
    h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)
    h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)

    ## fc2 layer ##
    W_fc2 = weight_variable([1024, 10])
    b_fc2 = bias_variable([10])
    prediction = tf.nn.softmax(tf.matmul(h_fc1_drop, W_fc2) + b_fc2)

    # the error between prediction and real data
    cross_entropy = tf.reduce_mean(-tf.reduce_sum(ys * tf.log(prediction),
                                                  reduction_indices=[1]))  # loss
    train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)

    with tf.Session(config=cfg) as sess:
        # important step
        # tf.initialize_all_variables() is no longer valid from
        # 2017-03-02 if using tensorflow >= 0.12
        if int((tf.__version__).split('.')[1]) < 12 and int((tf.__version__).split('.')[0]) < 1:
            init = tf.initialize_all_variables()
        else:
            init = tf.global_variables_initializer()
        sess.run(init)

        for i in range(1000):
            batch_xs, batch_ys = mnist.train.next_batch(100)
            sess.run(train_step, feed_dict={xs: batch_xs, ys: batch_ys, keep_prob: 0.5})
            if i % 50 == 0:
                print(compute_accuracy(
                    mnist.test.images[:1000], mnist.test.labels[:1000]))

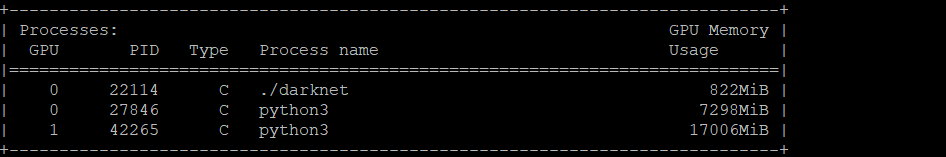

GPU memory usage before running the code above:

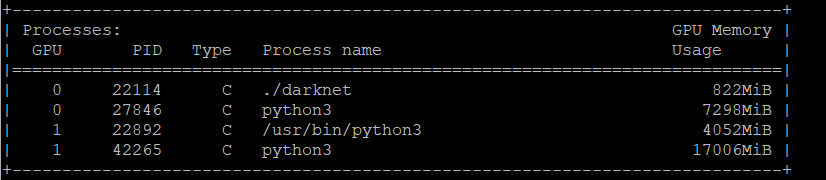

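Using GPU number 1 with a 10% memory fraction (this GPU has 32508M in total; actual usage is somewhat higher than the configured value, which is why it shows 4052M)

(The PID of this program is 22892)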
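The gap between the configured fraction and the observed usage can be checked with a little arithmetic. This sketch only uses the numbers quoted above; the explanation in the comment (memory outside TensorFlow's own allocator, such as the CUDA context) is a likely cause rather than something measured here.

```python
total_mib = 32508     # total memory of the GPU, as quoted above
fraction = 0.1        # per_process_gpu_memory_fraction used in the example
observed_mib = 4052   # usage shown by nvidia-smi for the process

# The fraction limits TensorFlow's own allocation on the device.
configured_limit = total_mib * fraction

# Whatever is left over sits outside that limit; this is probably
# the CUDA context and library workspaces, but that is an assumption.
overhead = observed_mib - configured_limit

print("configured limit: %.1f M" % configured_limit)
print("observed usage:   %d M" % observed_mib)
print("difference:       %.1f M" % overhead)
```

When sharing a card, it is therefore safer to budget slightly more than `total * fraction` per process.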

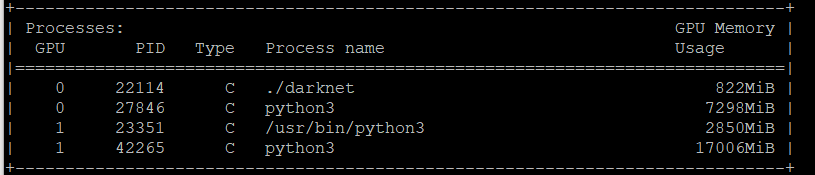

Using GPU number 1 with minimal memory through allow_growth (usage is 2850M)

(The PID of this program is 23351)
